When you listen to a song, you hear a finished blend. The producer made hundreds of decisions about how to layer, balance, and process individual audio tracks to create the mix you’re hearing. All of those decisions are baked into a single stereo audio file.

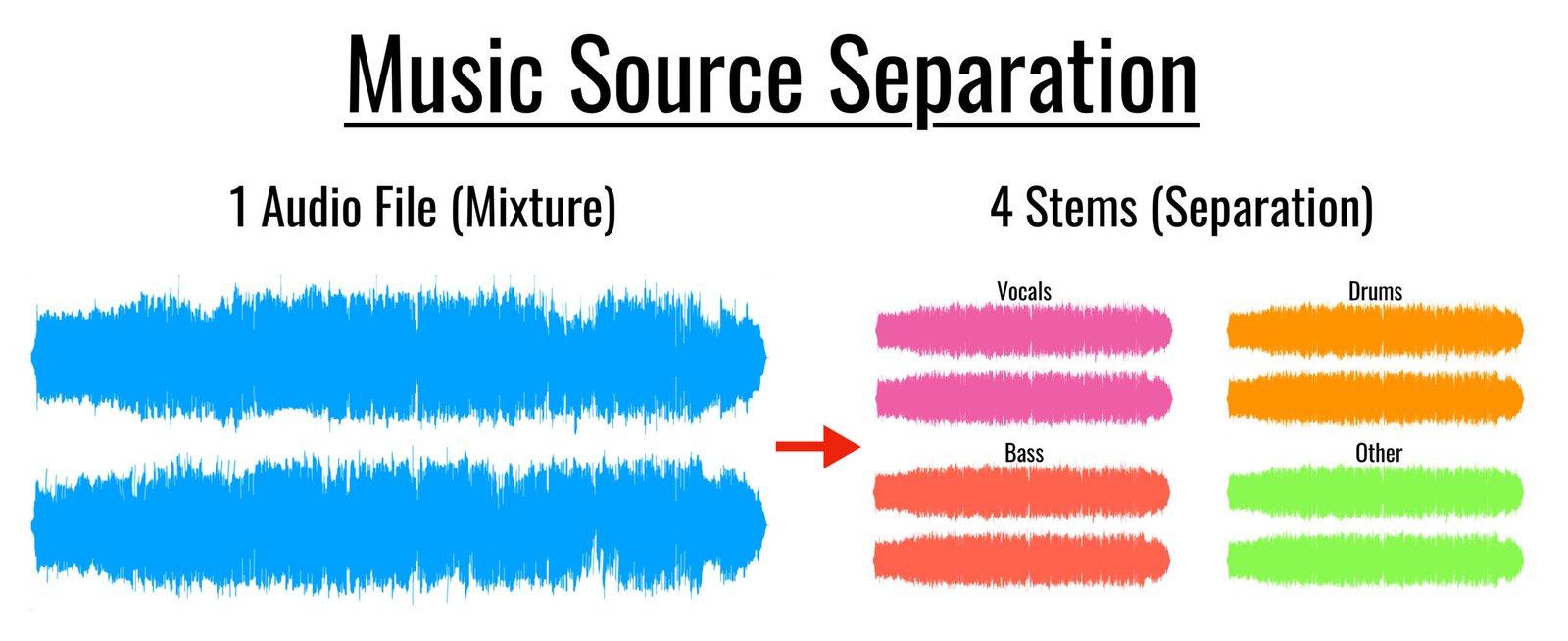

For most of music history, that process was one-way. Once a song was mixed, the individual parts were inaccessible unless you had the original session files. The ability to run a recorded song through software and pull out the drums, the bass, the vocals, and the instrumental elements as separate audio — that’s what AI stem separation does. And it works well enough now to be useful in real workflows.

Here’s what’s actually happening when the software does this.

The Problem AI Stem Separation Solves

Sounds mix together in a way that doesn’t leave a record of where each sound came from. When a guitar and a piano play at the same time, their sound waves combine. The combined waveform doesn’t contain labels indicating which part of it came from the guitar and which from the piano. What reaches a microphone — or what’s stored in a digital audio file — is just one combined waveform.
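
As a minimal sketch of why a mix keeps no record of its sources, the Python below adds two toy signals sample by sample. The sine waves standing in for a guitar and a piano, the 8 kHz sample rate, and the helper names are all assumptions made for illustration; real instruments are far more complex.

```python
import math

SAMPLE_RATE = 8000  # samples per second (assumed for this toy example)

def sine(freq_hz, n_samples, amplitude=0.5):
    """Return n_samples of a sine wave at freq_hz."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * n / SAMPLE_RATE)
            for n in range(n_samples)]

def mix(*sources):
    """Mix sources by adding them sample by sample."""
    return [sum(samples) for samples in zip(*sources)]

guitar = sine(196.0, 8)    # hypothetical stand-in for a guitar note
piano = sine(261.63, 8)    # hypothetical stand-in for a piano note
mixture = mix(guitar, piano)

# A different pair of sources that adds up to the same mixture:
# move a quarter of the piano signal into the guitar signal.
other_guitar = [g + 0.25 * p for g, p in zip(guitar, piano)]
other_piano = [0.75 * p for p in piano]
same_mixture = mix(other_guitar, other_piano)

# Each mixed sample is a single number, so both decompositions look identical.
print(all(math.isclose(a, b, abs_tol=1e-12)
          for a, b in zip(mixture, same_mixture)))
```

Because two different pairs of sources produce the same mixture, the waveform alone cannot say which pair was actually played. A separator has to bring in outside knowledge about what instruments sound like.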

Traditional audio processing techniques can separate sounds based on their frequency content. If an instrument occupies a frequency range that other instruments don’t, you can filter the mix to remove everything else and leave it relatively isolated. But most instruments overlap in frequency. Vocals, guitars, keyboards, and many acoustic instruments all produce significant content in the mid-range frequency band. Where sources overlap, frequency-based separation produces partial results at best, along with artifacts that damage the extracted audio.

Frequency filtering separates sounds that don’t overlap. AI separates sounds that do.
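
The filtering limitation described above can be illustrated with a toy experiment. This is only a sketch: the signals are pure cosines, the "instruments" are hypothetical, and the naive transform and the `low_pass` helper are written here for illustration, not taken from any audio library.

```python
import cmath
import math

N = 64  # number of samples in each toy signal (assumed)

def tone(bin_index, amplitude=1.0):
    """A cosine that completes bin_index cycles over N samples."""
    return [amplitude * math.cos(2 * math.pi * bin_index * n / N) for n in range(N)]

def add(a, b):
    return [x + y for x, y in zip(a, b)]

def dft(x):
    """Naive discrete Fourier transform; slow, but fine for N = 64."""
    return [sum(x[n] * cmath.exp(-2j * math.pi * k * n / N) for n in range(N))
            for k in range(N)]

def idft(spectrum):
    """Inverse of dft(), returning the real part of each sample."""
    return [(sum(spectrum[k] * cmath.exp(2j * math.pi * k * n / N)
                 for k in range(N)) / N).real
            for n in range(N)]

def low_pass(x, cutoff_bin):
    """Zero every frequency bin above cutoff_bin, then return to the time domain."""
    spectrum = dft(x)
    # min(k, N - k) folds the mirrored upper half of the DFT onto the lower half.
    kept = [spectrum[k] if min(k, N - k) <= cutoff_bin else 0 for k in range(N)]
    return idft(kept)

def max_error(a, b):
    return max(abs(x - y) for x, y in zip(a, b))

# Case 1: no overlap. The "bass" sits at bin 2 and the "flute" at bin 20.
bass = tone(2)
flute = tone(20)
clean_error = max_error(low_pass(add(bass, flute), cutoff_bin=8), bass)

# Case 2: overlap. The partials of the two sources interleave below the cutoff.
low_part = add(tone(2), tone(6))
overlapping = add(tone(4), tone(8))
bleed_error = max_error(low_pass(add(low_part, overlapping), cutoff_bin=6), low_part)

print(f"no overlap: max error {clean_error:.2e}")
print(f"overlap:    max error {bleed_error:.2f}")
```

In the first case the filter returns the bass almost exactly. In the second, one partial of the other source falls inside the pass band and comes through at full strength, which is the kind of bleed a filter alone cannot remove.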

What AI Actually Does Differently

An AI stem splitter trained on large amounts of music learns to recognize the patterns that distinguish different instrument types: not just their frequency content, but also their rhythmic behavior, their harmonic structure, and how they change over time.

Vocals have specific patterns: they change pitch in ways that follow melodic movement, they have characteristic attack and decay shapes, they involve vibrato and breath sounds. Drums have different patterns: periodic transients, specific frequency signatures for kick, snare, and hi-hat, rhythmic structure that differs from melodic content. The AI learns these patterns from training data and uses them to make decisions about which parts of a mixed signal most likely came from which source.

This is more sophisticated than frequency filtering because it draws on learned patterns of how instruments behave rather than on frequency ranges alone. The AI isn’t just asking “what frequency is this?” It’s asking “what kind of instrument produces sounds with these characteristics?”

What the AI Is Actually Calculating

The practical process involves representing the audio as a visual map of how frequencies change over time (a spectrogram), then using a neural network to classify which regions of that map belong to which instrument categories. The network has seen millions of examples of what drums look like on a spectrogram versus vocals versus bass, and it applies that learned pattern recognition to the new input.

The separated output is reconstructed audio for each isolated stem — not the original recorded tracks, but a version that the AI has calculated should contain the specified source with other sources removed.
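
One common way to turn "which regions belong to which instrument" into separated audio is to multiply the mixture spectrogram by a mask with values between 0 and 1. The sketch below assumes that approach; it is not a description of any particular product. The tiny spectrograms are invented numbers, and the mask is an oracle computed from the known sources, standing in for what a trained network would have to predict from the mixture alone.

```python
# Toy magnitude spectrograms: rows are frequency bins, columns are time frames.
# All values are made up for illustration.
vocals = [[0.0, 0.0, 0.0],
          [0.8, 0.6, 0.0],
          [0.2, 0.4, 0.0]]
drums = [[0.9, 0.0, 0.9],
         [0.2, 0.0, 0.2],
         [0.0, 0.0, 0.0]]

def add(a, b):
    """Cell-by-cell sum of two spectrograms."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def ratio_mask(target, mixture, eps=1e-8):
    """Fraction of each time-frequency cell that belongs to the target."""
    return [[t / (m + eps) for t, m in zip(rt, rm)]
            for rt, rm in zip(target, mixture)]

def apply_mask(mask, mixture):
    """Scale each cell of the mixture by the matching mask value."""
    return [[k * m for k, m in zip(rk, rm)] for rk, rm in zip(mask, mixture)]

# Treating magnitudes as simply additive is a simplification.
mixture = add(vocals, drums)

# Oracle mask built from the known sources (a stand-in for a network's output).
vocal_mask = ratio_mask(vocals, mixture)
vocal_estimate = apply_mask(vocal_mask, mixture)

for row in vocal_estimate:
    print([round(v, 2) for v in row])
```

With a perfect mask the estimate matches the vocal grid to within rounding. A real network's mask is imperfect, so some drum energy stays in the vocal estimate and some vocal energy is lost. The final step, converting the masked spectrogram back into a waveform, is omitted here.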

How to Use AI Stem Separation Without Overestimating What It Does

Understand that separation quality varies with the mix. Tracks with clear separation between instruments in the original mix separate more cleanly than dense productions where many elements share frequency content. Sparse arrangements — acoustic guitar and vocals, piano trio recordings — often produce cleaner results than heavily layered productions.

Use stems for analysis, not always for re-release. AI stem splitter technology is accurate enough for learning, transcription, and production study. The separated stems may have artifacts, such as faint bleed from other instruments or tonal changes in extreme separation scenarios, that make them less suitable for direct commercial use in remixes or samples.

Apply it to your own sessions for post-production problems. If a specific element in one of your mixes needs to change and you no longer have the original session, running stem separation on the mix lets you process or replace that element without a full re-record.

Try it on recordings from the genres and eras you work in. Separation quality varies across musical styles and recording eras. Testing the tool on representative material from your workflow gives you an accurate sense of what to expect before relying on it for important projects.
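
If some of your test material comes from sessions where you still have the original multitrack stems, you can put a rough number on separation quality instead of judging by ear alone. The sketch below uses a basic signal-to-distortion ratio, assuming the true stem and the separated stem are time-aligned and equally long. The function name and the sample values are hypothetical.

```python
import math

def sdr_db(reference, estimate, eps=1e-12):
    """Signal-to-distortion ratio in decibels; higher means a closer match."""
    signal_energy = sum(r * r for r in reference)
    error_energy = sum((r - e) ** 2 for r, e in zip(reference, estimate))
    return 10 * math.log10((signal_energy + eps) / (error_energy + eps))

# Hypothetical samples: a true stem and two separated versions of it.
true_vocal = [1.0, 0.0, -1.0, 0.0]
clean_estimate = [0.9, 0.1, -0.9, -0.1]    # small errors
bleedy_estimate = [0.6, 0.4, -0.6, -0.4]   # larger errors, e.g. heavy bleed

clean_score = sdr_db(true_vocal, clean_estimate)
bleedy_score = sdr_db(true_vocal, bleedy_estimate)

print(round(clean_score, 1))
print(round(bleedy_score, 1))
```

The cleaner estimate scores higher. A single number like this will not capture everything a listener hears, so treat it as a supplement to critical listening rather than a replacement.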

Frequently Asked Questions

What is the fundamental difference between AI stem separation and traditional frequency filtering?

Frequency filtering separates sounds that don’t share frequency content — if an instrument occupies a range others don’t, you can filter to isolate it. Most instruments overlap in frequency: vocals, guitars, keyboards, and acoustic instruments all produce significant content in the mid-range band, so frequency-based separation produces artifacts that damage the extracted audio. AI separation learns to recognize the patterns that distinguish instrument types — not just frequency content but rhythmic behavior, harmonic structure, and how sounds change over time — which allows it to separate instruments that share frequency space.

How does an AI stem splitter actually process audio?

The practical process represents audio as a spectrogram — a visual map of how frequencies change over time — then uses a neural network to classify which regions of that map belong to which instrument categories. The network has been trained on large amounts of music and has learned what drums look like on a spectrogram versus vocals versus bass, applying that learned pattern recognition to new input. The separated output is reconstructed audio for each stem — not the original recorded tracks, but a version the AI has calculated should contain the specified source with other sources removed.

What are the practical limits of AI stem separation?

Separation quality varies with the mix: tracks with clear separation between instruments in the original recording separate more cleanly than dense productions where many elements share frequency content. Sparse arrangements like acoustic guitar and vocals often produce cleaner results than heavily layered productions. The separated stems may have artifacts — faint bleed from other instruments, tonal changes in extreme separation scenarios — that make them less suitable for direct commercial use in remixes or samples, though they’re accurate enough for learning, transcription, and production study.

The Practical Usefulness Right Now

AI audio separation has reached a level of quality where it’s genuinely useful: not as a perfect isolation tool, but as an analysis tool and a production aid. The gap between “the original tracks” and “what AI separation produces” is real, but narrow enough that the technology serves most of the uses people actually have for it.

Musicians using it to study recordings, producers pulling samples with it for creative inspiration, and engineers applying it to post-production problems are all getting real value from it. Understanding what it’s doing, and where its limits are, is what makes it useful rather than frustrating.